Confluent CCDAK - Confluent Certified Developer for Apache Kafka Certification Examination

You need to consume messages from Kafka using the command-line interface (CLI).

Which command should you use?

Which configuration is valid for deploying a JDBC Source Connector to read all rows from the orders table and write them to the dbl-orders topic?

The producer code below features a Callback class with a method called onCompletion().

When will the onCompletion() method be invoked?

Clients that connect to a Kafka cluster are required to specify one or more brokers in the bootstrap.servers parameter.

What is the primary advantage of specifying more than one broker?
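As an illustration of the parameter this question refers to, here is a minimal sketch of a client configuration that lists several brokers. The host names (`broker1`, `broker2`, `broker3`) and the class name are hypothetical, and the sketch only builds the configuration with `java.util.Properties`; it does not open a connection. The list is used for the client's initial connection to the cluster, so listing more than one broker lets the client still bootstrap if one of the listed brokers happens to be unavailable.

```java
import java.util.Properties;

public class BootstrapServersSketch {

    // Builds a client configuration that names three brokers (hypothetical hosts).
    static Properties clientConfig() {
        Properties props = new Properties();
        // Comma-separated host:port pairs; the client tries these for its initial connection.
        props.put("bootstrap.servers", "broker1:9092,broker2:9092,broker3:9092");
        return props;
    }

    public static void main(String[] args) {
        String servers = clientConfig().getProperty("bootstrap.servers");
        // Print each configured bootstrap broker on its own line.
        for (String server : servers.split(",")) {
            System.out.println(server);
        }
    }
}
```

The same `Properties` object would normally be passed to a producer or consumer constructor together with the serializer or deserializer settings, which are omitted here.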

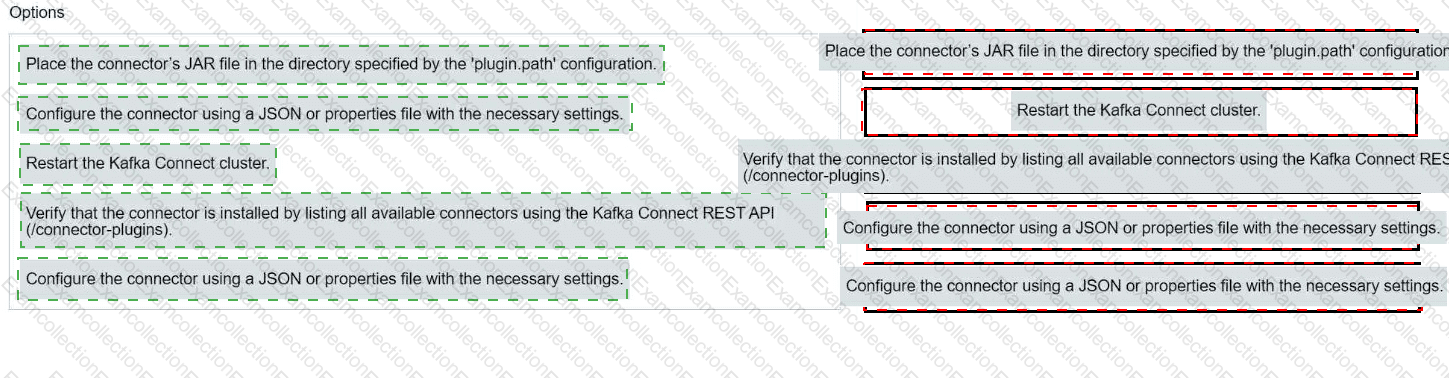

Match each configuration parameter with the correct deployment step in installing a Kafka connector.

Which two statements are correct about transactions in Kafka?

(Select two.)

You have a topic t1 with six partitions. You use Kafka Connect to send data from topic t1 in your Kafka cluster to Amazon S3. Kafka Connect is configured for two tasks.

How many partitions will each task process?
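The arithmetic behind this scenario can be sketched as follows. Sink connector tasks read from the topic as members of a consumer group, so the topic's partitions are divided among the tasks. The class and method names below are hypothetical, and the simple round-robin split is an assumption made for illustration; it is not Kafka's actual assignor, which may choose different partition numbers for each task while producing the same counts.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class TaskPartitionSketch {

    // Spreads partition numbers 0..partitions-1 across task ids 0..tasks-1 round-robin.
    static Map<Integer, List<Integer>> assign(int partitions, int tasks) {
        Map<Integer, List<Integer>> byTask = new TreeMap<>();
        for (int t = 0; t < tasks; t++) {
            byTask.put(t, new ArrayList<>());
        }
        for (int p = 0; p < partitions; p++) {
            byTask.get(p % tasks).add(p);
        }
        return byTask;
    }

    public static void main(String[] args) {
        // Topic t1 has six partitions and the connector runs two tasks.
        assign(6, 2).forEach((task, parts) ->
            System.out.println("task " + task + " -> " + parts.size() + " partitions " + parts));
    }
}
```

With six partitions and two tasks, each task ends up with three partitions in this sketch.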

A stream processing application tracks user activity in online shopping carts, including items added, removed, and ordered throughout the day for each user.

You need to capture data to identify possible periods of user inactivity.

Which type of Kafka Streams window should you use?

Which is true about topic compaction?

You are developing a Java application that includes a Kafka consumer.

You need to integrate Kafka client logs with your own application logs.

Your application is using the Log4j2 logging framework.

Which Java library dependency must you include in your project?